SR-SI: The methodology that gives AI persistent memory across any long-running project


Moe Hachem - February 22, 2026

106x performance improvement. A self-improving loop. And a section nobody expected to write.

V2 is live. Download it below.

Three months of continuous production use. 66,475 lines of code. A codebase, a methodology, and findings that went further than the original paper anticipated.

If you want the paper now, the form is below. It is not a newsletter sign-up, and you will not receive any email from me. It is just a way for me to know who’s finding this useful. The paper itself is free to access.

If you’re new here

A codebase is just one example. SR-SI has been applied to digital products, knowledge systems, long-running design projects, and complex document workflows, anywhere an AI agent needs to maintain coherent context across multiple sessions without starting from scratch every time.

The common thread is not the domain. It’s the problem: AI workflows have a memory problem. You explain your project, your architecture, your decisions, and three sessions later the AI asks you the same questions again. Context degrades. Drift accumulates. You end up doing the AI’s orientation work instead of your own.

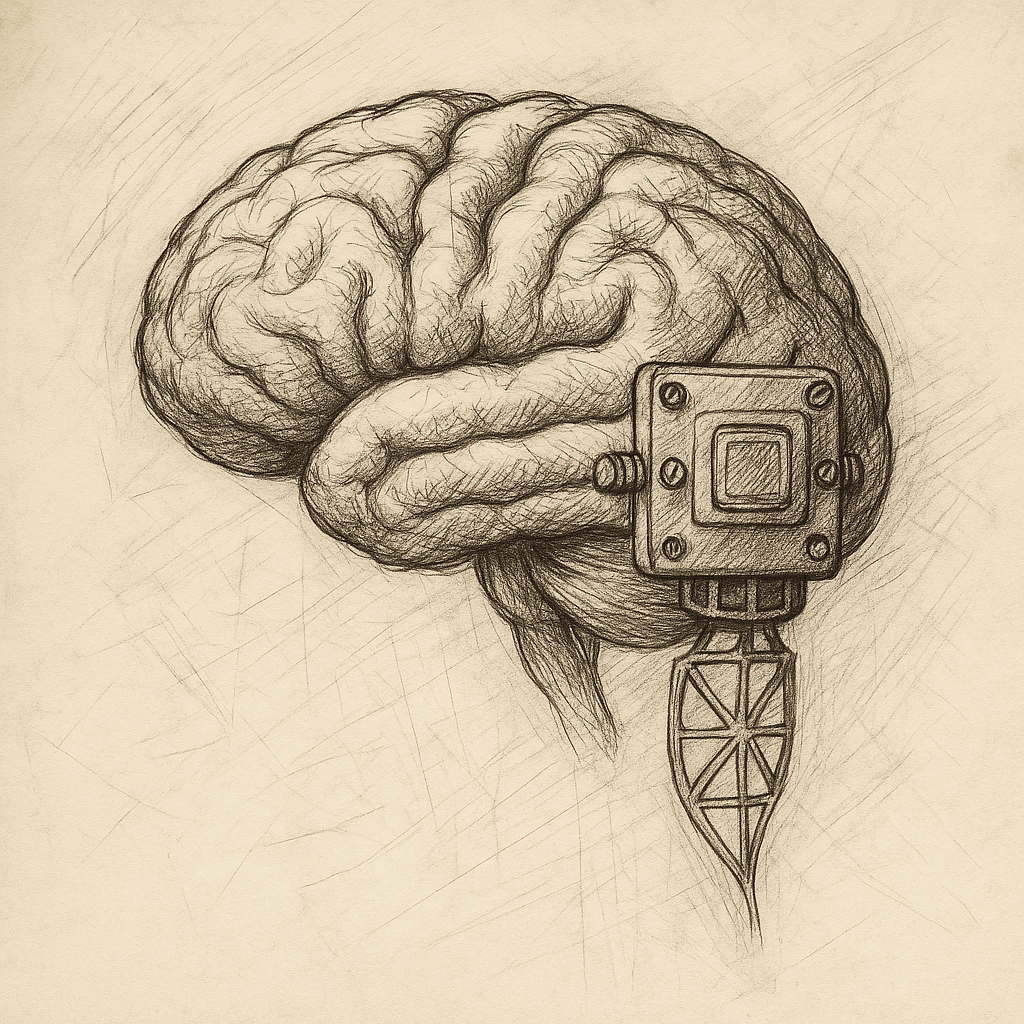

SR-SI (Simulated Recall via Shallow Indexing) solves this without external databases, model fine-tuning, or prompt engineering theater. The AI maintains a structured shallow index of your project, consults it before every task, and updates it as a byproduct of normal work.

The result is persistent, auditable, reconstructable context across sessions, as close to project memory as current AI architecture allows.
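As a rough illustration of the consult-then-update loop described above, here is a minimal sketch that keeps a small JSON index on disk. The entry fields (`summary`, `location`) and every function name are hypothetical, chosen for illustration only; none of them are taken from the paper, which remains the reference for the actual index structure.

```python
import json
from pathlib import Path


def load_index(path: Path) -> dict:
    """Read the shallow index, or start an empty one if no file exists yet."""
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    return {"entries": {}}


def consult(index: dict, keyword: str) -> dict:
    """Return the entries whose key or summary mentions the keyword."""
    needle = keyword.lower()
    return {
        key: entry
        for key, entry in index["entries"].items()
        if needle in key.lower() or needle in entry["summary"].lower()
    }


def record(index: dict, key: str, summary: str, location: str) -> None:
    """Add or overwrite one shallow entry: a one-line summary plus a pointer."""
    index["entries"][key] = {"summary": summary, "location": location}


def save_index(index: dict, path: Path) -> None:
    """Persist the index so the next session can start from it."""
    path.write_text(json.dumps(index, indent=2, sort_keys=True), encoding="utf-8")
```

In this sketch a session would call `load_index`, run `consult` before starting a task, call `record` for anything the task changed, and finish with `save_index`. The index stays shallow because each entry holds only a summary and a pointer, not the content itself.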

The original paper, published November 2025, documented this across three projects and 1000+ prompts. The post-publication findings were more significant.

What changed in V2

The numbers got bigger

The original paper showed a 7x Token Coherence improvement over an unstructured baseline.

A modular index architecture, which splits the monolithic index into scoped sub-indices by area, delivered an additional 9.5x improvement on its own, reducing per-task context load from 15,641 tokens to 1,645.
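To make the idea of scoped sub-indices concrete, the toy sketch below loads only the sub-index for the area a task touches. The area names, the index contents, and the word-count token estimate are all assumptions made for illustration; real token counts depend on the model's tokenizer, and the paper's own layout may differ.

```python
def estimate_tokens(text: str) -> int:
    """Crude proxy: whitespace-separated words, not real tokenizer counts."""
    return len(text.split())


def context_for_task(sub_indices: dict[str, str], areas: list[str]) -> str:
    """Concatenate only the sub-indices scoped to the areas a task touches."""
    return "\n".join(sub_indices[area] for area in areas if area in sub_indices)


# Hypothetical sub-indices, one per project area.
sub_indices = {
    "auth": "auth: login flow, session tokens, password reset",
    "billing": "billing: invoices, tax rules, payment provider adapter",
    "ui": "ui: design tokens, layout grid, form components",
}

# Loading everything approximates the old monolithic index.
monolith = context_for_task(sub_indices, list(sub_indices))
# A billing task only needs the billing sub-index.
scoped = context_for_task(sub_indices, ["billing"])

print(estimate_tokens(monolith), estimate_tokens(scoped))
```

The scoped load is smaller simply because the unrelated areas are never read, which is the mechanism behind the reduction in per-task context load.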

The cumulative improvement from an unstructured conversational workflow to the current architecture is approximately 106x.

That number is derived from the Token Coherence Metric (TCM = LOC / Net Tokens). Baseline TCM of 0.38. Current TCM of 40.4. The methodology for how it was calculated is documented in full.
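The ratios quoted above can be checked with a few lines of arithmetic. This sketch uses only the figures stated in this post and the TCM definition given here; the function names are mine, not the paper's.

```python
def tcm(lines_of_code: float, net_tokens: float) -> float:
    """Token Coherence Metric as defined in this post: LOC / Net Tokens."""
    if net_tokens <= 0:
        raise ValueError("net_tokens must be positive")
    return lines_of_code / net_tokens


def improvement(current: float, baseline: float) -> float:
    """How many times larger the current value is than the baseline."""
    return current / baseline


# Current TCM of 40.4 against the baseline TCM of 0.38.
cumulative = improvement(40.4, 0.38)

# Per-task context load: 15,641 tokens (monolithic) vs 1,645 (modular).
context_reduction = improvement(15_641, 1_645)

print(f"{cumulative:.1f}x cumulative, {context_reduction:.1f}x from modular indexing")
```

Both results round to the figures quoted in the text: about 106x cumulative and about 9.5x from the modular index.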

The maintenance shifted

SR-SI converts memory maintenance from a human-operated process into an agent-operated process with policy-level human control.

The agent maintains the index, flags its own bloat, and proposes its own restructures. The human sets the governance rules and approves changes.

Day-to-day upkeep becomes near-zero marginal human effort.

The system described itself

In February 2026 I ran a structured qualitative probe — asking the AI operating under SR-SI conditions to characterize its own retrieval behavior, failure modes, and confidence calibration.

The most important response: “SR-SI doesn’t change what I am. It materially changes how consistently I can perform over time.”

The experiment stepped into the philosophical

“Section 8: On the Boundary of Functional Identity” documents where three months of daily application eventually led.

What persists across sessions and what doesn’t. The someone-like threshold. The failure mode mirror between AI cognitive drift and human psychological drift. And what it would take for a system like this to develop something resembling continuity of identity.

It is the section I did not expect to write.

A minimal operationalization contract

Section 3.5 documents the five conditions sufficient to begin, regardless of domain, team size, or tool stack.

I took extra care not to write it as a prescribed setup. It is just the smallest set of things that need to be true for SR-SI to function.

Download V2

The paper is free. The form below is not a newsletter signup. I will not be sending you emails, nor am I building a mailing list. It exists so I have a rough sense of who is finding this work useful.

What’s next

A companion post that covers how SR-SI works in personal practice: the false starts, the principles that only became visible after violating them, and the setup that emerged from months of daily use.

V3, if it happens, will cover VCS-layer enforcement, confidence tagging, and the initiative question: what changes when the agent stops waiting for prompts and starts maintaining its own memory autonomously. That is, unless things take a completely different direction.

The experiment is still running, and you’re invited to join in: validate the methodology, disprove it, or help shape its development.

Looking for V1? You can find it here. V2 was intentionally written to build on V1 without retracting or walking back its initial claims.