AI doesn’t need an encyclopedia. It needs an index.


Moe Hachem - April 6, 2026

There’s a metaphor I keep returning to when explaining SR-SI, because it’s not actually a metaphor — it’s a precise description of the architectural choice you’re making every time you work with AI on something complex.

You can give AI an encyclopedia. Or you can give it an index.

Both lead to the same information. The usability is completely different.

What an encyclopedia-style approach looks like in practice

The encyclopedia approach: before each session, paste in the relevant code. Include the design doc. Add context from the previous conversation. Explain the project structure. Give the AI everything it might need to answer your question.

This feels thorough. It produces better outputs than starting completely cold. And it fails predictably as projects grow.

The failure mode is token cost. Every piece of context you include consumes tokens before any actual work happens. As your codebase grows from 5,000 lines to 35,000 lines, the amount of context you need to include scales with it. You’re spending an increasing proportion of your context window on orientation rather than production. Eventually you hit the window limit, or the cost becomes prohibitive, or the model starts ignoring the parts of the context that appeared earliest.

More fundamentally: you’re solving the wrong problem. The goal isn’t to give the AI all the information. The goal is to give the AI the ability to find the right information when it needs it.

What an index-style approach looks like in practice

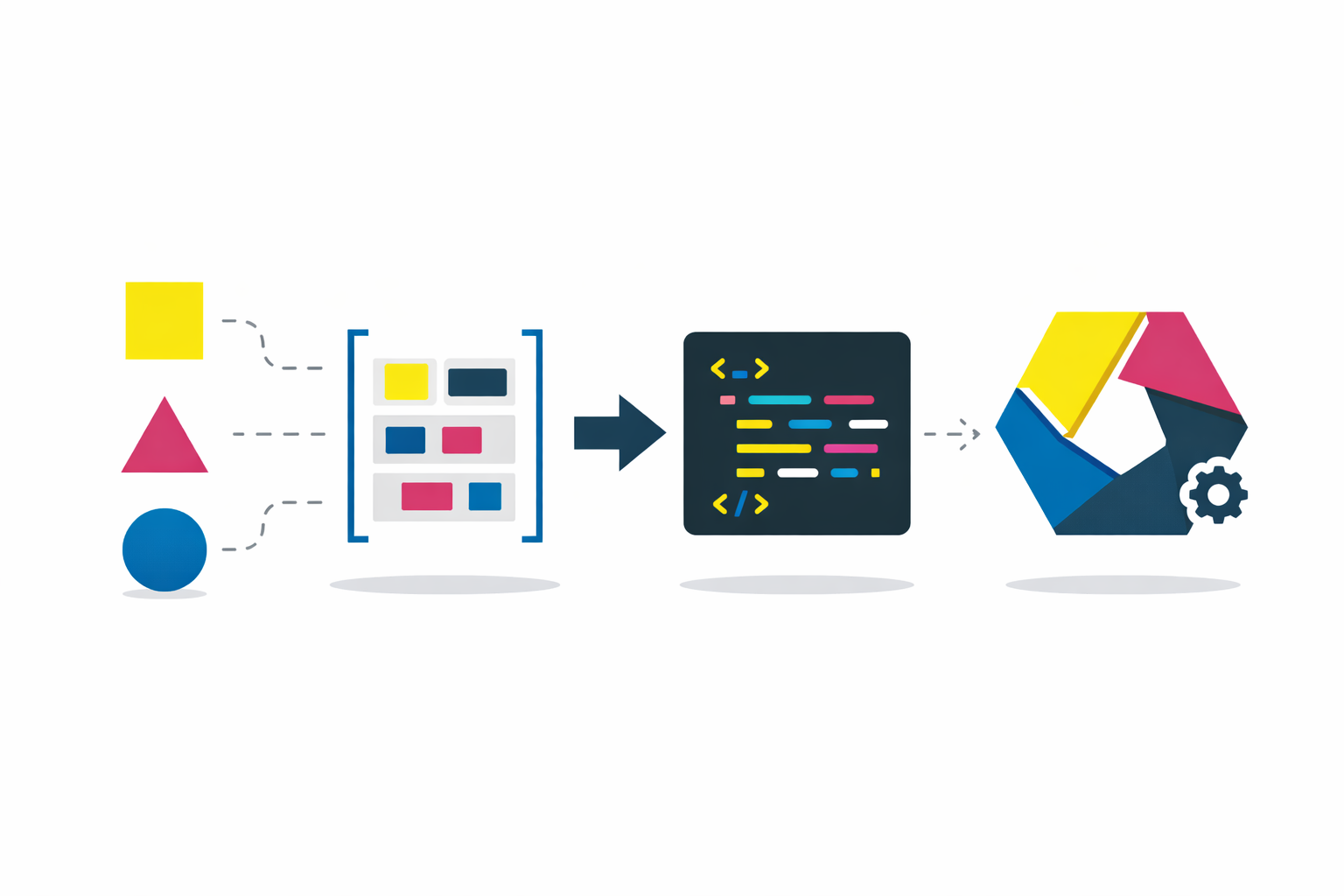

The index approach: before any code is written, you build a compact reference document. One line per file — path and purpose, not content. Architectural decisions recorded in compressed form. Business rules, conventions, cross-component dependencies. The document is machine-optimized, not human-optimized. It’s designed to be read by an AI agent at the start of every task, not by a human trying to understand the system.
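To make the shape of such a document concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `ProjectIndex` name, the four sections, the bracketed section labels, and the sample paths are hypothetical, not a prescribed SR-SI format.

```python
from dataclasses import dataclass, field


@dataclass
class ProjectIndex:
    """Compact, machine-oriented project reference (hypothetical layout)."""

    files: dict[str, str] = field(default_factory=dict)  # path -> one-line purpose
    decisions: list[str] = field(default_factory=list)  # compressed architectural decisions
    rules: list[str] = field(default_factory=list)  # business rules and conventions
    dependencies: list[tuple[str, str]] = field(default_factory=list)  # (component, depends on)

    def render(self) -> str:
        """Serialize to terse text: one line per entry, never file contents."""
        lines = ["[files]"]
        lines += [f"{path}: {purpose}" for path, purpose in sorted(self.files.items())]
        lines.append("[decisions]")
        lines += self.decisions
        lines.append("[rules]")
        lines += self.rules
        lines.append("[deps]")
        lines += [f"{component} -> {target}" for component, target in self.dependencies]
        return "\n".join(lines)


# Sample entries are invented for the example.
index = ProjectIndex(
    files={
        "src/billing/invoice.py": "invoice totals and tax rounding",
        "src/auth/session.py": "session tokens, expiry, refresh",
    },
    decisions=["db: single Postgres instance, no ORM"],
    rules=["money: integer cents only"],
    dependencies=[("billing", "auth")],
)
print(index.render())
```

The point of the sketch is the constraint, not the class: each entry is a path plus a purpose, so the rendered text grows by one short line per file regardless of how long the file itself is.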

The AI reads the index first. Then it retrieves only the specific files relevant to the current task. It doesn’t consume thousands of tokens orienting itself to everything — it spends a few hundred tokens finding exactly where it needs to go.
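The read-then-retrieve step can be sketched as follows. In real use the agent itself decides which files matter after reading the index; the keyword overlap below is only a stand-in for that judgment, and `parse_index`, `select_files`, and the sample entries are hypothetical names chosen for this example.

```python
import re


def parse_index(index_text: str) -> dict[str, str]:
    """Parse 'path: purpose' lines into a path -> purpose mapping."""
    entries = {}
    for line in index_text.splitlines():
        path, sep, purpose = line.partition(": ")
        if sep:  # skip lines that are not 'path: purpose' entries
            entries[path.strip()] = purpose.strip()
    return entries


def words(text: str) -> set[str]:
    """Lowercased alphanumeric tokens of a string."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_files(entries: dict[str, str], task: str, limit: int = 3) -> list[str]:
    """Rank indexed files by word overlap with the task description."""
    task_words = words(task)
    scored = []
    for path, purpose in entries.items():
        score = len(task_words & words(f"{path} {purpose}"))
        if score:
            scored.append((score, path))
    # Highest overlap first; ties broken alphabetically for stable output.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [path for _, path in scored[:limit]]


INDEX_TEXT = """src/billing/invoice.py: invoice totals and tax rounding
src/auth/session.py: session tokens, expiry, refresh
src/ui/theme.py: colors and spacing constants"""

entries = parse_index(INDEX_TEXT)
selected = select_files(entries, "fix tax rounding on invoice totals")
print(selected)  # ['src/billing/invoice.py']
```

Only the selected files are then loaded into context. The other entries cost one line each in the index and nothing more.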

The index is maintained as the project evolves. When a file moves, the index is updated. When an architectural decision changes, the record changes. The index stays current because it’s a first-class artifact of the development process, not an afterthought.
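One way to keep the index honest is a drift check that compares indexed paths against what is actually on disk. This is a sketch under assumptions: the `index_drift` helper and the `*.py` pattern are hypothetical, and where such a check runs (a pre-commit hook, CI, or the agent's own task checklist) is left open.

```python
import tempfile
from pathlib import Path


def index_drift(
    entries: dict[str, str], root: Path, pattern: str = "*.py"
) -> tuple[list[str], list[str]]:
    """Return (indexed paths missing on disk, files on disk missing from the index)."""
    indexed = set(entries)
    on_disk = {
        path.relative_to(root).as_posix()
        for path in root.rglob(pattern)
        if path.is_file()
    }
    missing = sorted(indexed - on_disk)  # moved or deleted since the index was written
    unindexed = sorted(on_disk - indexed)  # added without an index entry
    return missing, unindexed


# Demo against a throwaway directory so the example runs anywhere.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "src").mkdir()
    (root / "src" / "session.py").write_text("")
    (root / "src" / "tokens.py").write_text("")
    entries = {
        "src/session.py": "session expiry and refresh",
        "src/auth.py": "login flow",  # stale entry: file no longer exists
    }
    missing, unindexed = index_drift(entries, root)

print(missing)    # ['src/auth.py']
print(unindexed)  # ['src/tokens.py']
```

A check like this only catches moved, deleted, or new files. Changed architectural decisions still have to be recorded by whoever makes them.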

What this costs to build and what it returns

Building the initial index takes time, typically a few hours for a moderately complex project. That upfront cost is recovered quickly. Each session that doesn’t need the established architecture re-explained saves tokens. Each context-loss event that doesn’t happen avoids hours of rework.

Across the three projects where I measured this, the modular index variant reduced per-task context load from 15,641 tokens to approximately 1,645 tokens on a 66,000-line codebase. That’s not a marginal improvement. It’s a structural change in how much of your context window is available for actual work.
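For scale, the arithmetic on those two figures works out as follows. The token counts are the ones quoted above; the variable names are just labels for this example.

```python
before_tokens = 15_641  # per-task context load without the index
after_tokens = 1_645    # approximate per-task context load with the modular index

reduction = 1 - after_tokens / before_tokens
ratio = before_tokens / after_tokens

print(f"{reduction:.1%} fewer tokens, {ratio:.1f}x smaller")
# 89.5% fewer tokens, 9.5x smaller
```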

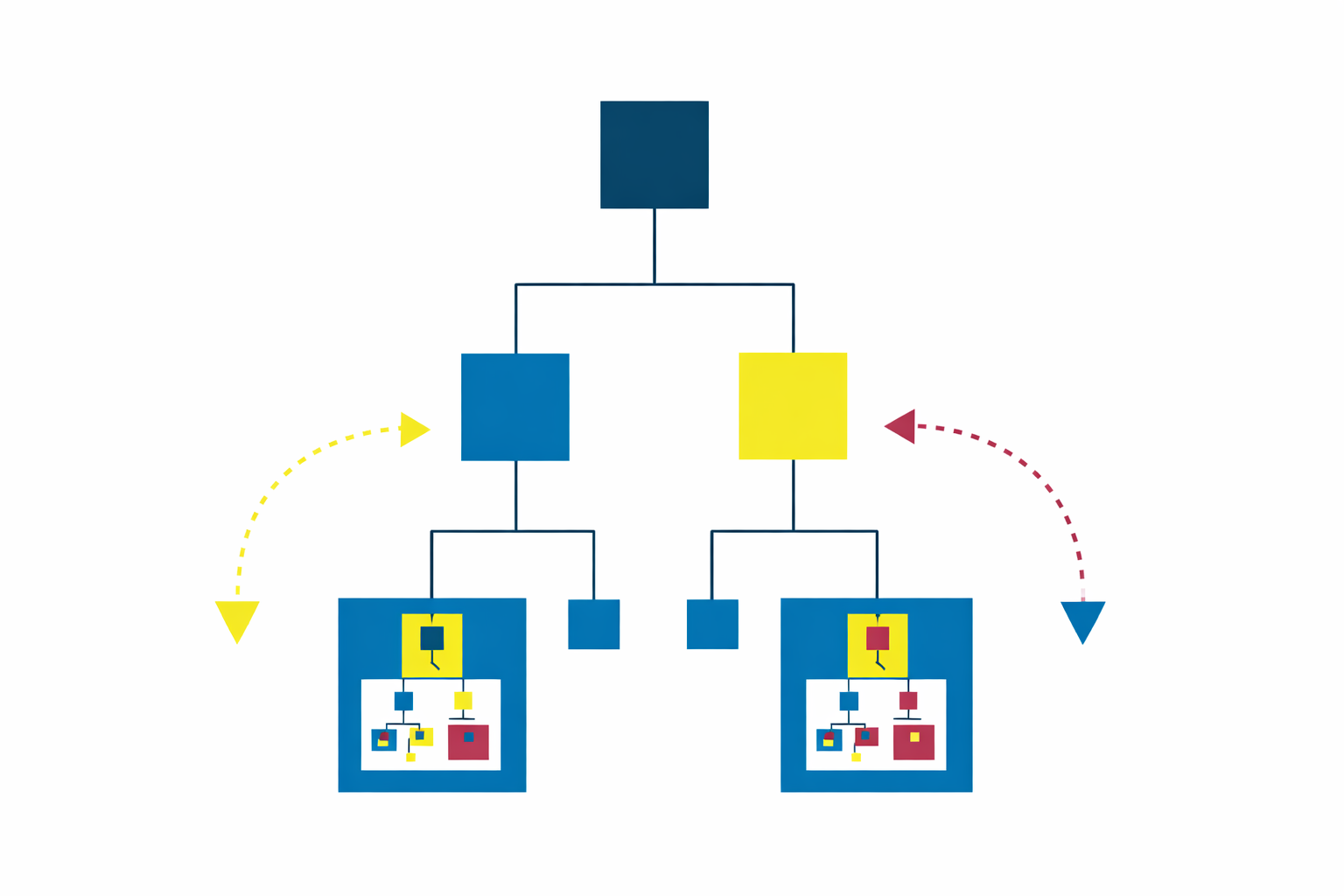

The encyclopedia approach scales poorly. Every additional line of code makes context management more expensive. The index approach is largely decoupled from project size: the index grows by about one line per file, not with the contents of every file, so it stays compact because it’s a navigation aid, not a copy of the codebase.

The broader principle

This isn’t just an AI development technique. It’s the same principle that makes good documentation valuable, good onboarding efficient, and good knowledge management systems actually usable.

The question is never “how do we give people more information?” It’s “how do we make the right information findable at the moment it’s needed?” Those are different design problems with different solutions. Most teams are building encyclopedias when they need indexes.
