How I maintain coherence across 66,000 lines of code without losing the thread



Moe Hachem - February 25, 2026

Most AI-augmented development workflows break somewhere between prompt 50 and prompt 200, at what I’ve come to call the context wall. This isn’t because the model gets worse. It’s a context problem.

The context (the accumulated understanding of what you’re building, why decisions were made, what was tried and abandoned) has no stable home. Every session, you re-explain. Every time you switch models, you start over, and the same goes for every AI collaborator you bring in. The context lives only in your head.

I’ve been running a 66,000+ line production codebase with AI as a core collaborator across multiple months and model versions. The context hasn’t decayed yet, and token efficiency has improved astronomically.

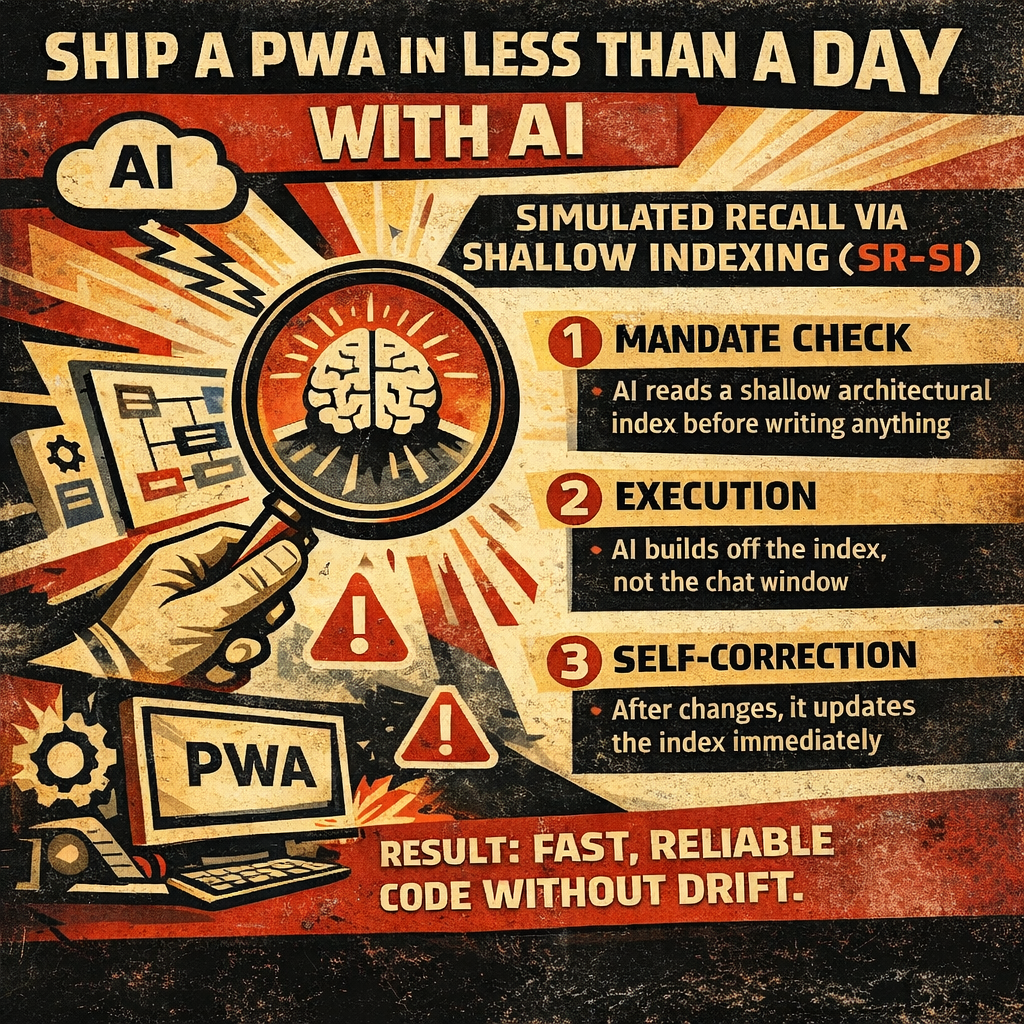

Here’s the system behind that. The SR-SI methodology (Simulated Recall via Shallow Indexing) works on a simple architectural principle: instead of trying to give AI everything, you give it a navigation layer that lets it find what it needs.

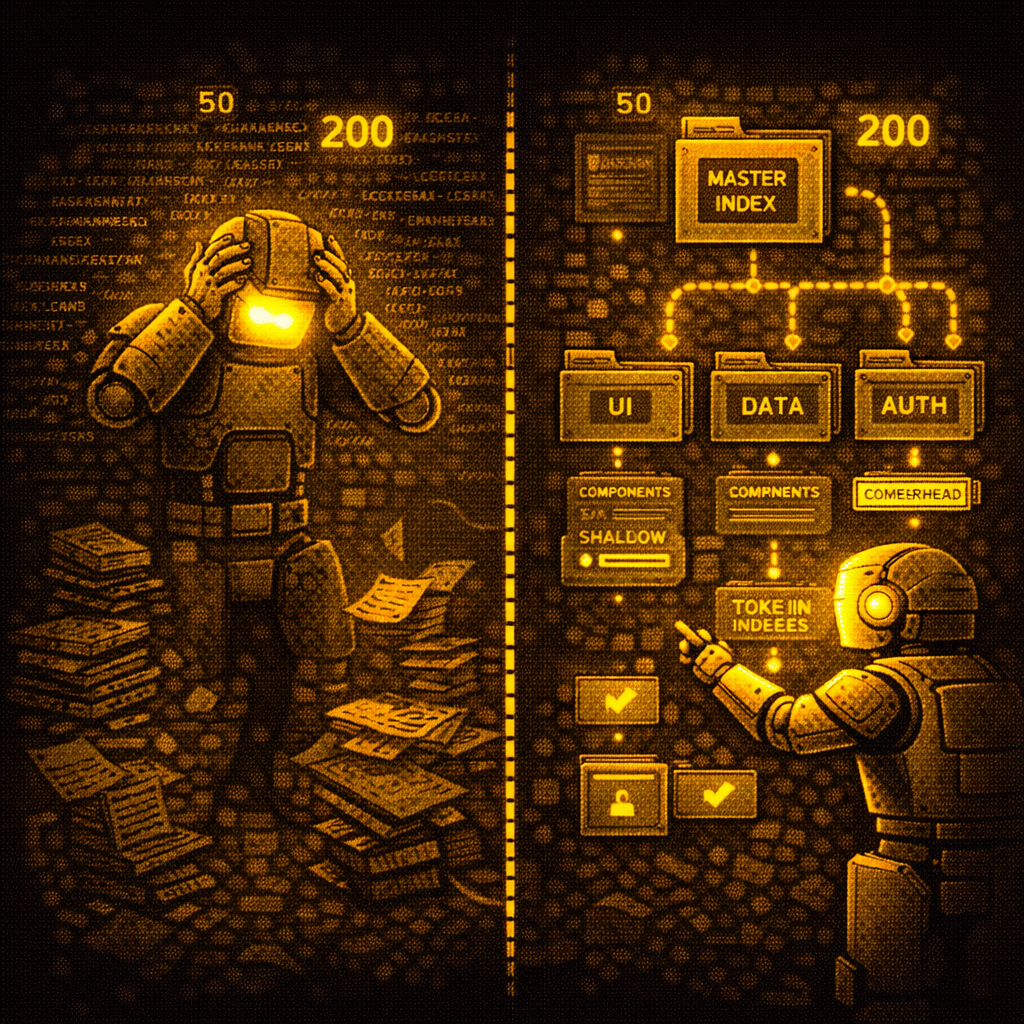

A shallow project index lives at the top level. It contains no implementation detail. What it contains: what each part of the system is responsible for, how the parts relate, and where to go for more depth on any given scope. Below it, sub-indices live at the component or segment level, and each one contains the relevant detail for its own scope only.
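To make the shape of that top-level index concrete, here is a minimal sketch in Python. It is an illustration under my own assumptions, not the format from the SR-SI white paper: the class names, the field names, the example scopes, and the `docs/index/...` paths are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class IndexEntry:
    """One entry of the shallow index. Holds no implementation detail."""
    scope: str              # name of the part of the system (hypothetical)
    responsibility: str     # what this part is responsible for
    relates_to: list[str]   # other scopes this part interacts with
    sub_index: str          # where to go for more depth (hypothetical path)


@dataclass
class ShallowIndex:
    """Top-level navigation layer, keyed by scope name."""
    entries: dict[str, IndexEntry] = field(default_factory=dict)

    def add(self, entry: IndexEntry) -> None:
        self.entries[entry.scope] = entry

    def render(self) -> str:
        """Render the index as the short text a model would read first."""
        lines = []
        for e in self.entries.values():
            related = ", ".join(e.relates_to) or "none"
            lines.append(
                f"{e.scope}: {e.responsibility} "
                f"| relates to: {related} | more: {e.sub_index}"
            )
        return "\n".join(lines)


def build_example_index() -> ShallowIndex:
    """Build a toy three-scope index. All values are made up for illustration."""
    index = ShallowIndex()
    index.add(IndexEntry("auth", "Sign-in and session handling",
                         ["api"], "docs/index/auth.md"))
    index.add(IndexEntry("api", "HTTP endpoints and request validation",
                         ["auth", "billing"], "docs/index/api.md"))
    index.add(IndexEntry("billing", "Invoices and payment state",
                         ["api"], "docs/index/billing.md"))
    return index


if __name__ == "__main__":
    print(build_example_index().render())
```

Each entry answers the three questions and nothing more: what the part does, what it touches, and where the deeper sub-index lives.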

When AI begins a task, it reads the shallow index first. It orients itself, so it knows where it is and where it last left off before it starts moving.
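That orientation step can be sketched as a small function that assembles the context for one task: the whole shallow index, a note on where work last stopped, and the sub-index for the scope in question only. This is a sketch under assumptions. The dictionary layout, the `last_state` note, and the `build_context` helper are hypothetical names for illustration, not part of any published SR-SI tooling.

```python
# Hypothetical shallow index: one short responsibility line per scope.
SHALLOW_INDEX = {
    "auth": "Sign-in and session handling",
    "api": "HTTP endpoints and request validation",
    "billing": "Invoices and payment state",
}

# Hypothetical sub-indices: scope-level detail, loaded only when needed.
SUB_INDICES = {
    "auth": "Sessions are stored server-side; tokens rotate on login.",
    "api": "Handlers validate input before calling services.",
    "billing": "Invoice state machine: draft -> issued -> paid.",
}


def build_context(task_scope: str,
                  shallow_index: dict[str, str],
                  sub_indices: dict[str, str],
                  last_state: str) -> str:
    """Assemble the text handed to the model at the start of a task."""
    if task_scope not in shallow_index:
        raise KeyError(f"unknown scope: {task_scope}")

    parts = ["# Project index"]
    # Orientation first: every scope, one line each.
    for scope, responsibility in shallow_index.items():
        parts.append(f"- {scope}: {responsibility}")

    parts.append("# Where we left off")
    parts.append(last_state)

    # Depth only for the scope the task touches.
    parts.append(f"# Detail for scope: {task_scope}")
    parts.append(sub_indices[task_scope])
    return "\n".join(parts)


if __name__ == "__main__":
    print(build_context(
        "billing", SHALLOW_INDEX, SUB_INDICES,
        last_state="Refund flow half-implemented; tests pending.",
    ))
```

The model sees every scope at one line of depth, but only the `billing` sub-index in full, which is the point of keeping the top level shallow.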

The result is three measurable things:

- Token overhead drops dramatically: AI isn’t consuming the entire codebase to answer a scoped question.

- Coherence persists across sessions: The index is the memory, not the model’s context window.

- Model switching becomes trivial: The project’s identity lives in the index, not in any particular conversation.
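If you want a rough check of the first point on your own project, something like the sketch below can compare the size of "everything" against the size of an index-scoped context. It assumes a crude heuristic of about four characters per token, which is not a real tokenizer and will differ by model. The function names are hypothetical, and the code measures text you give it rather than reproducing any figure from this post.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate. Assumes ~4 characters per token."""
    if not text:
        return 0
    return max(1, round(len(text) / chars_per_token))


def overhead_ratio(full_texts: list[str], scoped_context: str) -> float:
    """How many times larger the full codebase is than the scoped context."""
    full = sum(estimate_tokens(t) for t in full_texts)
    scoped = estimate_tokens(scoped_context)
    if scoped == 0:
        raise ValueError("scoped context is empty")
    return full / scoped


if __name__ == "__main__":
    # Stand-in strings; replace with real file contents and a real context.
    files = ["a" * 4000, "b" * 4000]
    context = "c" * 400
    print(overhead_ratio(files, context))
```

For real measurements, swap the heuristic for the tokenizer of the model you actually use.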

The 106x improvement in cumulative token coherence I documented in the SR-SI white paper came from applying this structure across a project with real complexity, measuring before and after, and comparing against baseline unstructured development.

This isn’t theoretical. It’s the architecture running every product I’m currently building.

The full methodology, including the index structure and maintenance protocol, is documented at mghachem.com. If you’re hitting the 200-prompt wall in your own work, that’s where to start.

SR-SI: The methodology that gives AI persistent memory across any long-running project