The problem with AI isn't intelligence. It's orientation.

Moe Hachem - February 24, 2026

Every team I talk to has the same complaint:

The outputs are generic. The AI sounds confident but misses what matters, every draft needs heavy editing, and we keep going back to square one.

They blame the model. They blame their prompts. They upgrade to a better version and get slightly better generic outputs.

The problem isn’t intelligence, though. Every major model you’re using right now is extraordinarily capable. The problem is that it starts from the centre of all human knowledge, not the centre of your problem.

Think about what happens when you open a new session: The model has no idea who you are, what you’ve built, what decisions you’ve already made, what failed last quarter, what your product actually does, or what your team calls things internally. It defaults to the safest, broadest answer it can construct from everything it knows.

That answer will be coherent and well-written, and it will miss the point. This is an orientation problem, not a prompting problem. Prompting optimises a broken interaction; orientation fixes the foundation.

The question most teams ask is: how do I prompt AI better? The question that actually produces different results is: what does AI need to know about us, and how do we make that knowledge findable?

Those are not the same question. The first is about technique, while the second is about architecture.

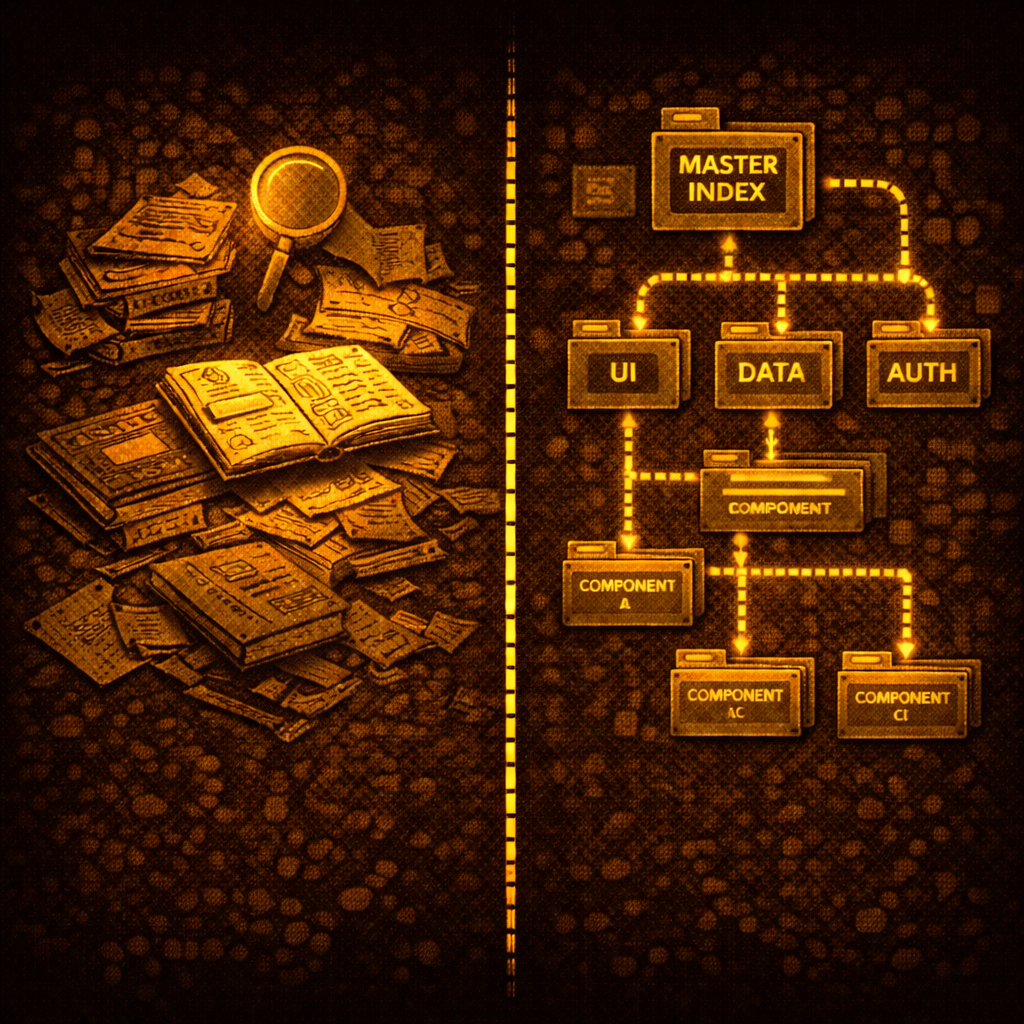

For the past few months I’ve been obsessively building that architecture: a methodology called SR-SI (Simulated Recall via Shallow Indexing) that gives AI a structured way to navigate your specific context before it executes any task.

SR-SI is not a prompt library, nor is it a set of templates. It’s a navigable index of what matters, organised the way AI actually retrieves information.

The outputs? They’re not incrementally better; they’re categorically different.

Over the next several weeks I’m going to document exactly how this works, where it came from, and what it’s produced across real projects. I’m starting with the problem, because if you don’t understand why AI fails, you’ll keep fixing the wrong thing.

You can find the full methodology here:

SR-SI: The methodology that gives AI persistent memory across any long-running project