The AI isn't an oracle, it's a collaborator

Moe Hachem - February 23, 2026

There’s a fundamental difference between consulting an external oracle and conversing with an embedded collaborator. The problem is that most AI tools are optimized for the first, while the best work happens in the second.

Here’s what I’ve learned building complex systems with AI:

The AI shouldn’t be external to your project. It should be embedded in it.

When you treat AI as an external oracle, you’re asking it to generate comprehensive plans for a system it’s never seen. It has no project context, so it simulates understanding through verbosity. The output might be detailed, but it’s disconnected from your actual codebase, constraints, and patterns.

You get synthetic certainty from actual ignorance.

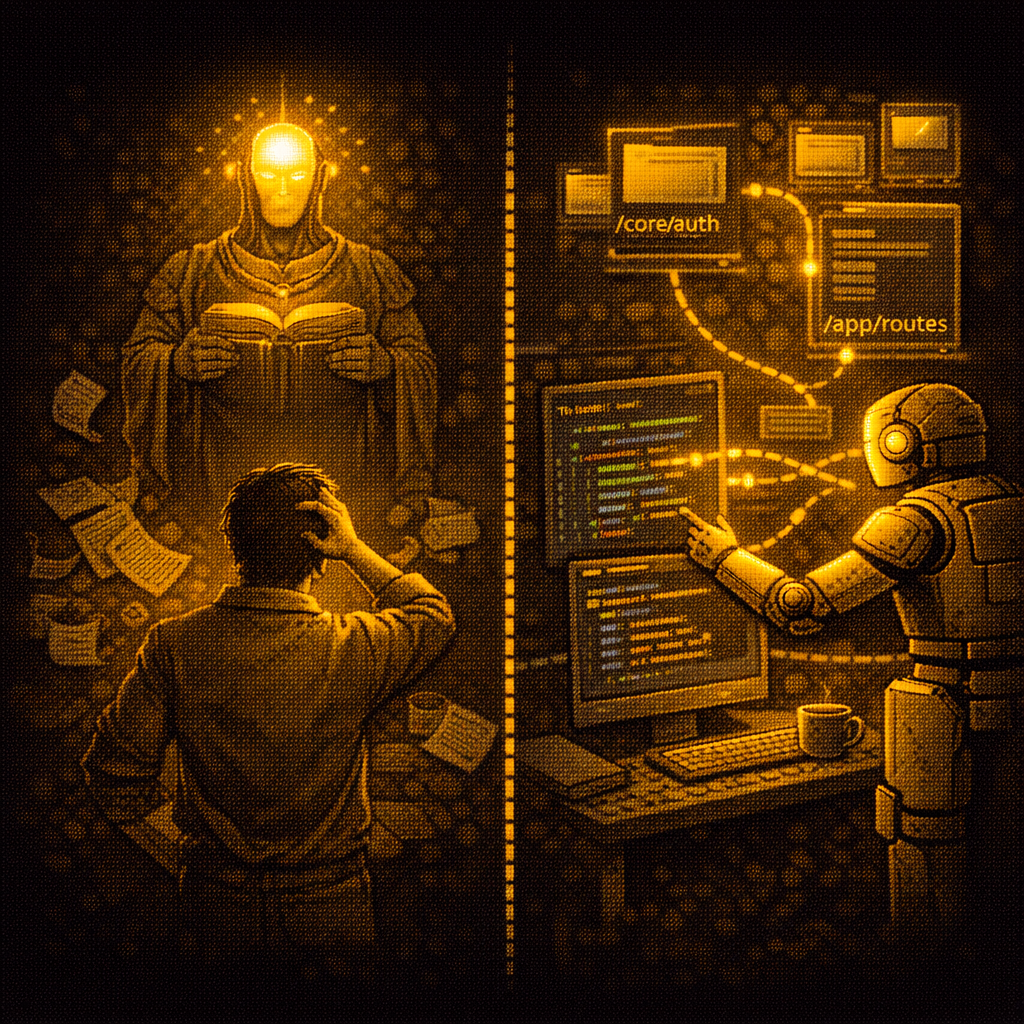

When AI functions as an embedded collaborator, something different happens. It maintains persistent memory of the project. It knows where components live and why. When you ask it something, it’s building from lived context, not first principles. You get structural continuity across sessions.

The difference in practice is stark.

Ask an oracle to “generate a complete authentication system spec” and you’ll get ten pages of documentation that ignores your existing auth patterns and proposes solutions incompatible with your stack. You’ll spend more time adapting the spec than just building.

Ask a collaborator “We need to add OAuth - you know our setup, what’s the integration path?” and it checks where auth currently lives, identifies your session management approach, and proposes changes that fit your actual structure. You’re iterating on real constraints, not imagined ones.

This is the lived experience distinction.

- An oracle generates knowledge.

- A collaborator remembers experience.

When the AI has lived experience of your project, it knows auth lives in /core/auth because of async constraints. It remembers you refactored that component because of race conditions. It understands this pattern appears in three places and they’re interconnected for a reason.

That’s not a spec. That’s institutional memory.

The token economics are completely different too:

- The oracle model burns 5000+ tokens generating a spec from scratch because it must simulate everything.

- The collaborator model maintains a compact index that the AI consults before every task. It’s compact because it’s real, not simulated.
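To make the "compact index" idea concrete, here is a minimal sketch of what one could look like. Everything in it is an assumption for illustration: the `IndexEntry` shape, the `context_for` helper, the keyword matching, and every path other than `/core/auth` are hypothetical, not a description of any particular tool.

```python
from dataclasses import dataclass, field


@dataclass
class IndexEntry:
    """One line of project memory: where something lives and why."""
    path: str
    why: str
    keywords: set[str]
    related: list[str] = field(default_factory=list)


# Hypothetical index. Only /core/auth comes from this post; the other
# entries are invented to show how interconnected components are recorded.
PROJECT_INDEX = [
    IndexEntry("/core/auth", "kept here because of async constraints",
               {"auth", "oauth", "login"}, related=["/core/session"]),
    IndexEntry("/core/session", "session management that auth depends on",
               {"session", "cookie"}),
    IndexEntry("/ui/forms", "shared form components", {"form", "input"}),
]


def context_for(task: str, index: list[IndexEntry]) -> str:
    """Return the few index lines relevant to a task, plus their neighbours."""
    words = {w.strip(".,?!-").lower() for w in task.split()}
    by_path = {entry.path: entry for entry in index}
    selected: dict[str, IndexEntry] = {}
    for entry in index:
        if entry.keywords & words:
            selected[entry.path] = entry
            # Pull in connected components so the model sees the dependency.
            for rel in entry.related:
                if rel in by_path:
                    selected.setdefault(rel, by_path[rel])
    return "\n".join(f"{e.path}: {e.why}" for e in selected.values())


if __name__ == "__main__":
    print(context_for("We need to add OAuth", PROJECT_INDEX))
```

Prepending the returned lines to a prompt is one way to give the model that context before a task. The point of the sketch is the size: two short lines of real project history instead of a generated spec.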

When you’re iterating on a complex system, you don’t need comprehensive specs. You need continuity across sessions. You need the AI to remember what you built yesterday so you can keep building today without reloading your entire mental model.

I don’t want a consultant who generates impressive documents. I want a collaborator who remembers the structure we’re building together. Someone who knows why we made that tradeoff, where that constraint came from, how these components interlock.